5 interesting advancements revealed for developers at the Google I/O keynote

Google’s 2025 I/O keynote was packed with updates for developers. On top of the latest news about Project Starline, Astra, and Mariner, all of which have now progressed beyond the research phase, Google announced further technology updates from its DeepMind lineup. Many of these will be available for testing before they are released more widely. Here is some of what Google had to share.

Gemini 2.5 updates

Google said developers have greatly enjoyed working with the already available Gemini 2.5 models, which allow them to build games and apps in a single shot. The brand released a preview version of Gemini 2.5 Pro to developers ahead of I/O, and it noted that users have already been using the model in impressive ways and that it topped the leaderboards in popular LLM benchmarks, making it the brand’s most intelligent model to date. At the keynote, Google also unveiled Gemini 2.5 Flash, which it says excels in benchmarks covering reasoning, code, and long context, and ranks second only to Gemini 2.5 Pro. Gemini 2.5 Flash is set to be generally available in early June, and Gemini 2.5 Pro will follow soon after. Both models are currently available for developers to test in AI Studio and Vertex AI, as well as to paid users in the Gemini app.

Native audio output

Google announced some interesting text-to-speech previews at I/O, including a multi-speaker function that supports two voices within native audio output. The first-of-its-kind model can render voices in different ways and with expressive nuance, including switching to a whisper mid-speech and switching between languages. The model supports 24 languages and can go back and forth between two of them, such as English and Hindi, while keeping the same tone, as shown in the I/O demo. The text-to-speech capability is currently available in the Gemini API. A Gemini 2.5 Flash preview version of native audio dialogue will also be offered, allowing developers to build more natural conversational experiences. The model can distinguish between the main speaker and background voices, so it knows when to pause and when to respond.

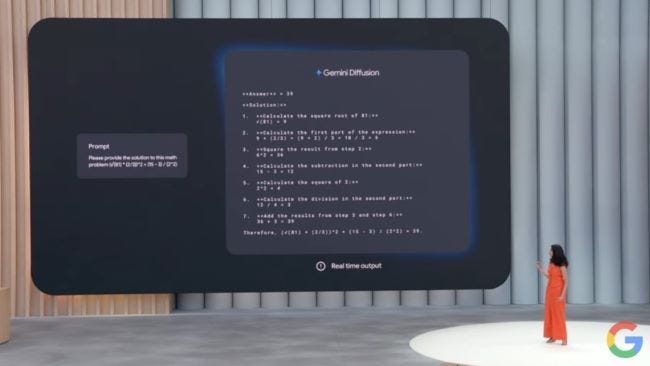

Gemini Diffusion

Google discussed how it began experimenting with diffusion techniques in its image and video models. Now it is bringing the technology to text models for editing tasks, including math and code. The brand detailed that the new Gemini Diffusion model it announced at I/O is a state-of-the-art experimental text diffusion model that can view text not only left to right but also up and down, which enables it to make corrections while it is still generating. This gives the model higher speed and much lower latency. The demo at the keynote showed the model generating a solution to a math problem within seconds. Google noted that the announced version of Gemini Diffusion is five times faster than its current fastest model, Gemini 2.0 Flash-Lite. The brand indicated that it plans to continue developing its diffusion technology to lower latency across all its Gemini models and will soon launch a faster Gemini 2.5 Flash-Lite.

Deep Think

Google shared details about a new mode for Gemini 2.5 Pro called Deep Think, which it explained was developed because model responses improve when the model is given more time to think. The mode has benefited from Google’s extensive research into thinking and reasoning as well as its newly developed parallel generation technique, also seen in Gemini Diffusion. The brand noted that Deep Think has received high scores in several performance tests. Because it is natively multimodal like other Gemini models, it excels on the leading benchmarks. Google said it is currently taking more time to run additional safety evaluations on Deep Think and to get further feedback from safety experts. However, it is making the mode available to trusted testers via the Gemini API to gather their feedback before releasing it widely.

Universal AI system

Google discussed its aspiration to take Gemini from being the best multimodal foundation model to being what it calls a world model, which would allow the AI to function like the human brain by making plans, imagining new experiences, and modeling aspects of the world. Having started with developments like Project Astra, the brand’s vision is to make Gemini into a universal AI assistant that includes native audio, improved memory, and added computer control. The brand showcased a research prototype based on Project Astra that seamlessly helped a person fix their bike and complete other tasks through a back-and-forth conversation with the AI and other people, while Gemini on a mobile app completed other tasks in the background. Google said it is currently gathering feedback on these functions from trusted testers and aims to bring them to Gemini Live, to new Search experiences, and to the Live API for developers, as well as to additional form factors, such as Android XR glasses.