Google brings its best AI research, Starline, Astra, and Mariner, to its app suite

Google discussed many research developments at its I/O keynote, including Starline's 3D calls, Astra's universal AI vision, and Mariner's task automation.

Google shared more details on several of its long-running AI projects at its I/O keynote on Tuesday. The keynote marked a major shift toward artificial intelligence, with most of the announcements focused on AI. Google started the event by updating developers on three ongoing projects, Starline, Astra, and Mariner, detailing their current status and the services that will soon be available. Here’s what you need to know about what was shared.

Project Starline

Google has been developing Project Starline for several years, having announced it at a prior I/O conference. It’s a 3D video conferencing technology intended to make you feel like you’re in the same room as the other party, even if you’re in separate locations. The technology transforms a standard 2D video conferencing stream into a hyper-realistic 3D view using a custom video model that captures different angles of a subject from six cameras. The 3D feed runs at 60 frames per second for an accurate, immersive, and natural experience. The company has rebranded Project Starline as Google Beam and announced that it is collaborating with HP to bring the technology to devices later this year.

Google has also now implemented Starline technology into its Google Meet video conferencing app, allowing for real-time speech translation that mimics the tone, patterns, and expressions of speakers in the translated language. Google is making English and Spanish translations available to Premium Meet subscribers now and will add more languages in the coming weeks. The real-time translation feature will also be available for Google Meet Enterprise later this year.

Project Astra

Google’s Project Astra is the company’s effort to develop a universal AI assistant that can see, speak, and interpret the world around it. Google is integrating Project Astra technology into its Gemini Live camera and screen sharing feature, which will allow users to talk with the AI tool about their surroundings. Google CEO Sundar Pichai noted that testers have given positive feedback about the technology, having used the Astra-powered Gemini Live for tasks including practicing for a job interview and training for a marathon. A demo at the I/O keynote showed how Astra can correct users when they misidentify objects, such as calling a garbage truck a convertible or a streetlight a skinny building. Astra gently pointed out the speaker’s error and gave the correct answer. Gemini Live camera and screen sharing is now available on iOS and Android.

Project Mariner

Google has been developing its Project Mariner technology to expand its AI agents' ability to interact with and operate browsers and computer software. AI agents function independently and complete tasks on behalf of their users. Google released Project Mariner as an early research prototype in December 2024.

Noting the progress it has made since that initial launch, Google introduced a Multitasking feature powered by Project Mariner that can oversee up to 10 tasks at once. It also introduced a feature called Teach and Repeat, which can observe a task once, learn it, and repeat similar tasks in the future. These Project Mariner functions will be available for developers through the Gemini API, with companies including Automation Anywhere and UiPath already using them. They will be more widely available over the summer.

Project Mariner will also power Agent-to-Agent communication, which will allow agents to talk to each other, as well as agent-to-service communication through the Model Context Protocol (MCP), a standard introduced by Anthropic that allows agents to be integrated with other services. Pichai noted that the Gemini SDK is now compatible with MCP tools, which will help bring more agentic capabilities to Chrome, Search, and the Gemini app.

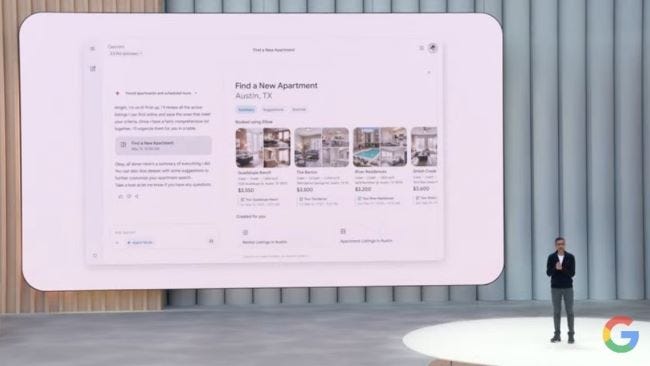

These advances will aid Agent Mode, a feature for the Gemini app that Google also showcased at the keynote. Through a demo, Google showed how you can enter a prompt to hunt for an apartment with a $1,200 budget and a washer/dryer requirement. With Agent Mode, Gemini will search rental site listings, while Mariner will adjust the search results according to the necessary filters. If you find a listing you like, Gemini will use MCP to select it and set up a viewing for you. It will continue browsing for listings until you end the search. An experimental version of Agent Mode is coming soon to Gemini app subscribers.

The final Project Mariner-powered feature is called Personalized Smart Replies, an updated version of the Gemini Smart Replies feature in Gmail. Using a technology called personal context, which allows Gemini, with your permission, to access relevant information within Google applications, it can fashion a response to an email as if you wrote it yourself. The demo shows Pichai replying to an email from a friend who asks for travel advice for a trip to Utah, which Pichai has already visited. Personalized Smart Replies can search his old documents, such as travel itineraries, and other emails to get a grasp of how he typically drafts emails, including tone, style, and common words, and then generate a draft. The email can be edited at will before it is sent off to the friend. Personalized Smart Replies will be available in Gmail this summer for subscribers.